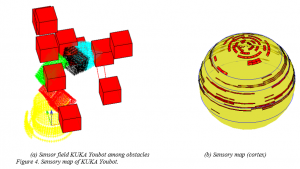

We develop spatial cognition for a robot based on its proprioception. This is essential to human-robot coexistence, in which robots should sense, understand, and make decisions about their environment in the same way human beings do. Objects in the robot's workspace are classified and labelled using hierarchical feature classification techniques. Using measurements from proprioceptive sensors, virtual somatic sensors are placed on the robot according to the Nyquist sampling criterion. A self-map of the robot, built from the inputs of the virtual somatic sensors, represents the neighboring space. The self-map has different regions representing different parts of the robot. This is analogous to the somatosensory cortex of the human brain, where sensory information from the organs is received and processed. In the human brain, different parts of this cortex differ in size because of the varying sensitivities of the organs. Similarly, the sensitivity of each region of the self-map depends on how many sensors are located in that region.
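As a rough illustration of the sensor placement and region sizing described above, the following Python sketch counts evenly spaced sensors per body segment and derives each region's share of the self-map. It is a minimal sketch under stated assumptions, not the project's implementation: the source does not say which quantity is sampled, so the sketch assumes each segment has a smallest spatial feature size to resolve and applies the Nyquist criterion as "sensor spacing strictly below half of that size". The function `nyquist_sensor_count`, the segment names, and all numbers are hypothetical.

```python
import math

def nyquist_sensor_count(length, min_feature):
    """Fewest evenly spaced sensors (endpoints included) whose spacing is
    strictly below half of min_feature, the smallest spatial period to resolve."""
    return math.floor(2 * length / min_feature) + 2

# Hypothetical segments: (length in m, smallest feature size in m).
segments = {"torso": (0.5, 0.5), "arm": (1.0, 0.25), "gripper": (0.25, 0.0625)}

counts = {name: nyquist_sensor_count(*dims) for name, dims in segments.items()}
total = sum(counts.values())

# Assumption: each self-map region gets a share proportional to its sensor count.
shares = {name: n / total for name, n in counts.items()}
```

With these made-up numbers the short gripper receives as many sensors as the much longer arm, so it occupies an equally large region of the self-map, which mirrors how finely sensed organs take up more of the somatosensory cortex.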

This sensory information is transformed into a form suitable for various purposes, for example navigation among obstacles using a Markov decision process (MDP). The information is used to estimate the value function for a non-stationary MDP with a varying number of states. The MDP yields control policies for the robot to navigate the space. The non-stationary case is solved using a stationary approximation, owing to the paucity of methods for solving non-stationary problems.
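To make the stationary approximation concrete, the sketch below runs standard value iteration on a small grid for one frozen snapshot of the obstacles. It is an illustrative assumption about how such an approximation could be set up, not the method used in this work: the grid world, the deterministic moves, the step cost of -1, the discount factor, and the function name `value_iteration` are all hypothetical. Re-running it whenever the obstacle set changes gives a different number of states each time.

```python
import itertools

def value_iteration(rows, cols, obstacles, goal, gamma=0.95, tol=1e-6):
    """Solve a stationary grid MDP for one frozen snapshot of the obstacles."""
    # Free cells are the states; their number changes with the obstacle set.
    states = [s for s in itertools.product(range(rows), range(cols))
              if s not in obstacles]
    moves = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}
    values = {s: 0.0 for s in states}

    def step(state, move):
        nxt = (state[0] + move[0], state[1] + move[1])
        return nxt if nxt in values else state  # blocked moves leave the robot in place

    while True:
        delta = 0.0
        for s in states:
            if s == goal:
                continue  # the goal is terminal and keeps value 0
            # Bellman backup: every move costs 1, then discount the next value.
            best = max(-1.0 + gamma * values[step(s, m)] for m in moves.values())
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:
            break

    # Greedy policy: all moves cost the same, so pick the best next state.
    policy = {s: max(moves, key=lambda a: values[step(s, moves[a])])
              for s in states if s != goal}
    return values, policy

# One snapshot: a 3x3 workspace with a single obstacle in the middle.
values, policy = value_iteration(3, 3, obstacles={(1, 1)}, goal=(2, 2))
```

Calling `value_iteration` again with a different `obstacles` set yields a new value function over a different state set, which is one simple way to treat a slowly changing environment as a sequence of stationary problems.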

Researcher: Dr. Pramod Chembrammel